Back in June, Microsoft struck deals with the University of California and the University of Toronto to scan titles from their nearly 50 million (combined) books into its Windows Live Book Search service. Today, the Guardian reports that they’ve forged a new alliance with Cornell and are going to step up their scanning efforts toward a launch of the search portal sometime toward the beginning of next year. Microsoft will focus on public domain works, but is also courting publishers to submit in-copyright books.

Making these books searchable online is a great thing, but I’m worried by the implications of big corporations building proprietary databases of public domain works. At the very least, we’ll need some sort of federated book search engine that can leap the walls of these competing services, matching text queries to texts in Google, Microsoft and the Open Content Alliance (which to my understanding is mostly Microsoft anyway).

But more important, we should get to work with OCR scanners and start extracting the texts to build our own databases. Even when they make the files available, as Google is starting to do, they’re giving them to us not as fully functioning digital texts (searchable, remixable), but as strings of snapshots of the scanned pages. That’s because they’re trying to keep control of the cultural DNA scanned from these books — that’s the value added to their search service.

But the public domain ought to be a public trust, a cultural infrastructure that is free to all. In the absence of some competing not-for-profit effort, we should at least start thinking about how we as stakeholders can demand better access to these public domain works. Microsoft and Google are free to scan them, and it’s good that someone has finally kickstarted a serious digitization campaign. It’s our job to hold them accountable, and to make sure that the public domain doesn’t get redefined as the semi-public domain.

google and the future of print

Veteran editor and publisher Jason Epstein, the man who first introduced paperbacks to American readers, discusses recent Google-related books (John Battelle, Jean-Noël Jeanneney, David Vise etc.) in the New York Review, and takes the opportunity to promote his own vision for the future of publishing. As if to reassure the Updikes of the world, Epstein insists that the “sparkling cloud of snippets” unleashed by Google’s mass digitization of libraries will, in combination with a radically decentralized print-on-demand infrastructure, guarantee a bright future for paper books:

[Google cofounder Larry] Page’s original conception for Google Book Search seems to have been that books, like the manuals he needed in high school, are data mines which users can search as they search the Web. But most books, unlike manuals, dictionaries, almanacs, cookbooks, scholarly journals, student trots, and so on, cannot be adequately represented by Googling such subjects as Achilles/wrath or Othello/jealousy or Ahab/whales. The Iliad, the plays of Shakespeare, Moby-Dick are themselves information to be read and pondered in their entirety. As digitization and its long tail adjust to the norms of human nature this misconception will cure itself as will the related error that books transmitted electronically will necessarily be read on electronic devices.

Epstein predicts that in the near future nearly all books will be located and accessed through a universal digital library (such as Google and its competitors are building), and, when desired, delivered directly to readers around the world — made to order, one at a time — through printing machines no bigger than a Xerox copier or ATM, which you’ll find at your local library or Kinkos, or maybe eventually in your home.

Predicated on the “long tail” paradigm of sustained low-amplitude sales over time (known in book publishing as the backlist), these machines would, according to Epstein, replace the publishing system that has been in place since Gutenberg, eliminating the intermediate steps of bulk printing, warehousing, and retail distribution, and reversing the recent trend of consolidation that has depleted print culture and turned the book business into a blockbuster market.

Epstein has founded a new company, OnDemand Books, to realize this vision, and earlier this year, they installed test versions of the new “Espresso Book Machine” (pictured) — capable of producing a trade paperback in ten minutes — at the World Bank in Washington and (with no small measure of symbolism) at the Library of Alexandria in Egypt.

Epstein is confident that, with a print publishing system as distributed and (nearly) instantaneous as the internet, the codex book will persist as the dominant reading mode far into the digital age.

carbon and silver

Carbon and Silver is a small show of Walker Evans’ 1935-36 photographs at the UBS gallery in New York. The purpose of this exhibit is to compare printing technologies. It focuses primarily on ink-jet prints in relation to gelatin silver prints, with a small sample of books side by side with their digitally printed counterparts, revealing how lithography literally pales next to the crispness of the digital.

The show invites meditations on the “authenticity” of reproductions, especially in a medium such as photography, which is itself based upon printing technology. In this show it is quite difficult to tell the original from the copy, and one wonders, as Baudrillard would have it, to what extent the copy has come to replace the original.

Most of the prints exhibited at the UBS belong to Evans’ body of work documenting the effect of the Great Depression on rural families for the Farm Security Administration in 1935-36. As a photographer, he was not particularly interested in producing his own prints as his main interest was to record information. These photographs were originally printed by the FSA as visual evidence reinforcing the New Deal.

Evans’ own interpretation of them appeared in American Photographs, the book that accompanied his exhibition at the Museum of Modern Art (the first one-man photography show ever mounted by a major museum). John T. Hill says in this exhibition’s catalogue that Evans “scrupulously controlled corrections of the printing plates, and using this process as an extension of his darkroom became a habit.” The interesting thing is that he understood that the book has a permanence that the exhibition does not.

Evans’ photographs have such crispness that they lend themselves to reproduction, even when the print is less than perfect. As a master of his medium he was absolutely aware of the difficulties of rendering full tonal scale in a black and white print. The ink-jet prints in this show are so remarkably close to their gelatin silver sisters that the viewer has to go back and forth from print to print in order to discern any possible difference. Evans loved the detail that an enlarged print brings out and the enlarged digital prints in this exhibition certainly do that.

Hill sums up the advantages of technology without denigrating the magnificence of the original process:

All new media affect voice and timbre. A greater tonal scale and more precise control of values are the two most significant tools offered by digital technology. The information so difficult to maintain in the dark and light ends of the scale using gelatin silver materials is now printable. Gelatin silver has been replaced by carbon black pigments laid onto archival paper. The music is the same; certain subtle notes are now heard more clearly.

google to scan spanish library books

The Complutense University of Madrid is the latest library to join Google’s digitization project, offering public domain works from its collection of more than 3 million volumes. Most of the books to be scanned will be in Spanish, as well as other European languages (read more in Reuters, or at the Biblioteca Complutense (en español)). I also recently came across news that Google is seeking commercial partnerships with English-language publishers in India.

While celebrating the fact that these books will be online (and presumably downloadable in Google’s shoddy, unsearchable PDF editions), we should consider some of the dynamics underlying the migration of the world’s libraries and publishing houses to the supposedly placeless place we inhabit, the web.

No doubt, Google’s scanners are acquiring an increasingly global reach, but digitization is a double-edged process. Think about the scanner. A photographic technology, it captures images and freezes states. What Google is doing is essentially photographing the world’s libraries and preparing the ultimate slideshow of human knowledge, the sequence and combination of the slides to be determined each time by the queries of each reader.

But perhaps Google’s scanners, in their dutifully accurate way, are in effect cloning existing arrangements of knowledge, preserving cultural trade deficits, and reinforcing the flow of knowledge power — all things we should be questioning at a time when new technologies have the potential to jigger old equations.

With Complutense on board, we see a familiar pyramid taking shape. Spanish takes its place below English in the global language hierarchy. Others will soon follow, completing this facsimile of the existing order.

what’s important to save

Rick Prelinger and Megan Shaw visited for lunch earlier in the week and gave us a preview of the very interesting presentation they made later that day about the SF-based Prelinger Library. Beginning in the 70s, Rick started collecting film and video that no one else seemed to want — industrials (e.g. GM’s World’s Fair and auto show films), educational films (think “how to be popular”, “how to be a good citizen” and how to make the perfect jelly), and filmed advertisements to be shown in movie theaters and on early TV. Rick’s contention, as the first serious media archaeologist, was that these films that no one intended to be saved or seen again — ephemeral films — often provided much more insight about how society has evolved in the twentieth century than the big-budget Hollywood films, which tend to be more self-conscious and indirect.

Below are a few clips from some of my favorites. The clip from “A Date With Your Family” contains one of the scariest moments I’ve ever encountered in film, when at the end of the clip, the narrator remarks as “father” returns from his day at the office . . . that “these boys greet their dad AS THOUGH they are genuinely glad to see him, AS THOUGH they had really missed being away from him during the day . . . ” In the second clip, “A Young Man’s Fancy,” the daughter in a pensive mood says “I was just thinking” and the mother says incredulously, “thinking?” as if that’s the most outlandish thing she can imagine her daughter doing.

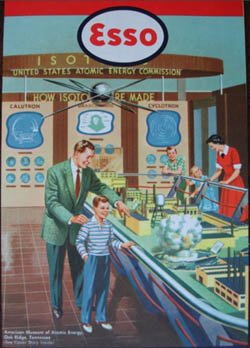

A few years ago, the Library of Congress, recognizing the inestimable value of Rick’s collection, bought the whole kit and caboodle. Since then, Rick and his partner, Megan Shaw, have turned their attention to print, building a library of unusual books, periodicals and print ephemera (e.g. an invaluable collection of ESSO’s state maps from the fifties) that favors serendipitous browsing and remix. (The cover of the ESSO map below depicts a young boy being introduced by his dad to the wonders of nuclear fusion.)

The following is from the description on the library’s website:

Though libraries live on (and are among the least-corrupted democratic institutions), the freedom to browse serendipitously is becoming rarer. Now that many research libraries are economizing on space and converting print collections to microfilm and digital formats, it’s becoming harder to wander and let the shelves themselves suggest new directions and ideas. Key academic and research libraries are often closed to unaffiliated users, and many keep the bulk of their collections in closed stacks, inhibiting the rewarding pleasures of browsing. Despite its virtues, query-based online cataloging often prevents unanticipated yet productive results from turning up on the user’s screen. And finally, much of the material in our collection is difficult to find in most libraries readily accessible to the general public.

While listening to Rick and Megan’s talk I had a minor AHA moment. A lot of our skepticism and concern about Google centers on the inherent dangers of a private company being entrusted with the care and feeding of our increasingly digitized culture. When Rick and Megan showed the cover of School Executives, a journal from the 40s, which featured an article on the value of teachers toting guns to enforce classroom discipline, I realized that Google’s digitization efforts focus entirely on codex books (maybe to be extended to periodicals that libraries have bothered to store). But the invaluable materials that might be called “print-based” ephemera — pamphlets, marketing materials, off-beat journals, zines etc. — will be absent in the future. The sad thing about this, as we know from Rick’s ephemeral film collection, is that often these pieces that were never meant to survive tell us more about how our culture evolved, and how we’ve ended up where we are, than many self-conscious efforts conceived with permanence in mind.

showtiming our libraries

Google’s contract with the University of California to digitize library holdings was made public today after pressure from The Chronicle of Higher Education and others. The Chronicle discusses some of the key points in the agreement, including the astonishing fact that Google plans to scan as many as 3,000 titles per day, and its commitment, at UC’s insistence, to always make public domain texts freely and wholly available through its web services.

But there are darker revelations as well, and Jeff Ubois, a TV-film archivist and research associate at Berkeley’s School of Information Management and Systems, homes in on some of these on his blog. Around the time that the Google-UC deal was first announced, Ubois compared it to Showtime’s now-infamous compact with the Smithsonian, which caused a ripple of outrage this past April. That deal, the details of which are secret, basically gives Showtime exclusive access to the Smithsonian’s film and video archive for the next 30 years.

The parallels to the Google library project are many. Four of the six partner libraries, like the Smithsonian, are publicly funded institutions. And all the agreements, with the exception of U. Michigan, and now UC, are non-disclosure. Brewster Kahle, leader of the rival Open Content Alliance, put the problem clearly and succinctly in a quote in today’s Chronicle piece:

We want a public library system in the digital age, but what we are getting is a private library system controlled by a single corporation.

He was referring specifically to sections of this latest contract that greatly limit UC’s use of Google copies and would bar them from pooling them in cooperative library systems. I vocalized these concerns rather forcefully in my post yesterday, and may have gotten a couple of details wrong, or slightly overstated the point about librarians ceding their authority to Google’s algorithms (some of the pushback in comments and on other blogs has been very helpful). But the basic points still stand, and the revelations today from the UC contract serve to underscore that. This ought to galvanize librarians, educators and the general public to ask tougher questions about what Google and its partners are doing. Of course, all these points could be rendered moot by one or two bad decisions from the courts.

librarians, hold google accountable

I’m quite disappointed by this op-ed on Google’s library initiative in Tuesday’s Washington Post. It comes from Richard Ekman, president of the Council of Independent Colleges, which represents 570 independent colleges and universities in the US (and a few abroad). Generally, these are mid-tier schools — not the elite powerhouses Google has partnered with in its digitization efforts — and so, since he is neither a publisher nor a direct representative of one of the cooperating libraries, I expected Ekman might take a more measured approach to this issue, which usually elicits either ecstatic support or vociferous opposition. Alas, no.

To the opposition, namely, the publishing industry, Ekman offers the usual rationale: Google, by digitizing the collections of six of the English-speaking world’s leading libraries (and, presumably, more are to follow) is doing humanity a great service, while still fundamentally respecting copyrights — so let’s not stand in its way. With Google, however, and with his own peers in education, he is less exacting.

The nation’s colleges and universities should support Google’s controversial project to digitize great libraries and offer books online. It has the potential to do a lot of good for higher education in this country.

Now, I’ve poked around a bit and located the agreement between Google and the U. of Michigan (freely available online), which affords a keyhole view onto these grand bargains. Basically, Google makes scans of U. of M.’s books, giving them images and optical character recognition files (the texts gleaned from the scans) for use within their library system, keeping the same for its own web services. In other words, both sides get a copy, both sides win.

If you’re not Michigan or Google, though, the benefits are less clear. Sure, it’s great that books now come up in web searches, and there’s plenty of good browsing to be done (and the public domain texts, available in full, are a real asset). But we’re in trouble if this is the research tool that is to replace, by force of market and by force of users’ habits, online library catalogues. That’s because no sane librarian would outsource their profession to an unaccountable private entity that refuses to disclose the workings of its system — in other words, how does Google’s book algorithm work, how are the search results ranked? And yet so many librarians are behind this plan. Am I to conclude that they’ve all gone insane? Or are they just so anxious about the pace of technological change, driven to distraction by fears of obsolescence and diminishing reach, that they are willing to throw their support uncritically behind the company, who, like a frontier huckster, promises miracle cures and grand visions of universal knowledge?

We may be resigned to the steady takeover of college bookstores around the country by Barnes and Noble, but how do we feel about a Barnes and Noble-like entity taking over our library systems? Because that is essentially what is happening. We ought to consider the Google library pact as the latest chapter in a recent history of consolidation and conglomeratization in publishing, which, for the past few decades (probably longer, I need to look into this further) has been creeping insidiously into our institutions of higher learning. When Google struck its latest deal with the University of California, and its more than 100 libraries, it made headlines in the technology and education sections of newspapers, but it might just as well have appeared in the business pages under mergers and acquisitions.

So what? you say. Why shouldn’t leaders in technology and education seek each other out and forge mutually beneficial relationships, relationships that might yield substantial benefits for large numbers of people? Okay. But we have to consider how these deals among titans will remap the information landscape for the rest of us. There is a prevailing attitude today, evidenced by the simplistic public debate around this issue, that one must accept technological advances on the terms set by those making the advances. To question Google (and its collaborators) means being labeled reactionary, a dinosaur, or technophobic. But this is silly. Criticizing Google does not mean I am against digital libraries. To the contrary, I am wholeheartedly in favor of digital libraries, just the right kind of digital libraries.

What good is Google’s project if it does little more than enhance the world’s elite libraries and give Google the competitive edge in the search wars (not to mention positioning them in future ebook and print-on-demand markets)? Not just our little institute, but larger interest groups like the CIC ought to be voices of caution and moderation, celebrating these technological breakthroughs, but at the same time demanding that Google Book Search be more than a cushy quid pro quo between the powerful, with trickle-down benefits that are dubious at best. They should demand commitments from the big libraries to spread the digital wealth through cooperative web services, and from Google to abide by certain standards in its own web services, so that smaller librarians in smaller ponds (and the users they represent) can trust these fantastic and seductive new resources. But Ekman, who represents 570 of these smaller ponds, doesn’t raise any of these questions. He just joins the chorus of approval.

What’s frustrating is that the partner libraries themselves are in the best position to make demands. After all, they have the books that Google wants, so they could easily set more stringent guidelines for how these resources are to be redeployed. But why should they be so magnanimous? Why should they demand that the wealth be shared among all institutions? If every student can access Harvard’s books with the click of a mouse, then what makes Harvard Harvard? Or Stanford Stanford?

Enlightened self-interest goes only so far. And so I repeat, that’s why people like Ekman, and organizations like the CIC, should be applying pressure to the Harvards and Stanfords, as should organizations like the Digital Library Federation, which the Michigan-Google contract mentions as a possible beneficiary, through “cooperative web services,” of the Google scanning. As stipulated in that section (4.4.2), however, any sharing with the DLF is left to Michigan’s “sole discretion.” Here, then, is a pressure point! And I’m sure there are others that a more skilled reader of such documents could locate. But a quick Google search (acceptable levels of irony) of “Digital Library Federation AND Google” yields nothing that even hints at any negotiations to this effect. Please, someone set me straight, I would love to be proved wrong.

Google, a private company, is in the process of annexing a major province of public knowledge, and we are allowing it to do so unchallenged. To call the publishers’ legal challenge a real challenge is to misidentify what really is at stake. Years from now, when Google, or something like it, exerts unimaginable influence over every aspect of our informated lives, we might look back on these skirmishes as the fatal turning point. So that’s why I turn to the librarians. Raise a ruckus.

UPDATE (8/25): The University of California-Google contract has just been released. See my post on this.

u.c. offers up stacks to google

Less than two months after reaching a deal with Microsoft, the University of California has agreed to let Google scan its vast holdings (over 34 million volumes) into the Book Search database. Google will undoubtedly dig deeper into the holdings of the ten-campus system’s 100-plus libraries than Microsoft, which is a member of the more copyright-cautious Open Content Alliance, and will focus primarily on books unambiguously in the public domain. The Google-UC alliance comes as major lawsuits against Google from the Authors Guild and Association of American Publishers are still in the evidence-gathering phase.

Meanwhile, across the drink, French publishing group La Martinière in June brought suit against Google for “counterfeiting and breach of intellectual property rights.” Pretty much the same claim as the American industry plaintiffs. Later that month, however, German publishing conglomerate WBG dropped a petition for a preliminary injunction against Google after a Hamburg court told them that they probably wouldn’t win. So what might the future hold? The European crystal ball is murky at best.

During this period of uncertainty, the OCA seems content to let Google be the legal lightning rod. If Google prevails, however, Microsoft and Yahoo will have a lot of catching up to do in stocking their book databases. But the two efforts may not be in such close competition as it would initially seem.

Google’s library initiative is an extremely bold commercial gambit. If it wins its cases, it stands to make a great deal of money, even after the tens of millions it is spending on scanning and indexing billions of pages, off a tiny commodity: the text snippet. But far from being the seed of a new literary remix culture, as Kevin Kelly would have us believe (and John Updike would have us lament), the snippet is simply an advertising hook for a vast ad network. Google’s not the Library of Babel, it’s the most sublimely sophisticated advertising company the world has ever seen (see this funny reflection on “snippet-dangling”). The OCA, on the other hand, is aimed at creating a legitimate online library, where books are not a means for profit, but an end in themselves.

Brewster Kahle, the founder and leader of the OCA, has a rather immodest aim: “to build the great library.” “That was the goal I set for myself 25 years ago,” he told The San Francisco Chronicle in a profile last year. “It is now technically possible to live up to the dream of the Library of Alexandria.”

So while Google’s venture may be more daring, more outrageous, more exhaustive, more — you name it –, the OCA may, in its slow, cautious, more idealistic way, be building the foundations of something far more important and useful. Plus, Kahle’s got the Bookmobile. How can you not love the Bookmobile?

the myth of universal knowledge 2: hyper-nodes and one-way flows

My post a couple of weeks ago about Jean-Noël Jeanneney’s soon-to-be-released anti-Google polemic sparked a discussion here about the cultural trade deficit and the linguistic diversity (or lack thereof) of digital collections. Around that time, Rüdiger Wischenbart, a German journalist/consultant, made some insightful observations on precisely this issue in an inaugural address to the 2006 International Conference on the Digitisation of Cultural Heritage in Salzburg. His discussion is framed provocatively in terms of information flow, painting a picture of a kind of fluid dynamics of global culture, in which volume and directionality are the key indicators of power.

First, he takes us on a quick tour of the print book trade, pointing out the various roadblocks and one-way streets that skew the global mind map. A cursory analysis reveals, not surprisingly, that the international publishing industry is locked in a one-way flow maximally favoring the West, and, moreover, that present digitization efforts, far from ushering in a utopia of cultural equality, are on track to replicate this.

…the market for knowledge is substantially controlled by the G7 nations, that is to say, the large economic powers (the USA, Canada, the larger European nations and Japan), while the rest of the world plays a subordinate role as purchaser.

Foreign language translation is the most obvious arena in which to observe the imbalance. We find that the translation of literature flows disproportionately downhill from Anglophone heights — the further from the peak, the harder it is for knowledge to climb out of its local niche. Wischenbart:

An already somewhat obsolete UNESCO statistic, one drawn from its World Culture Report of 2002, reckons that around one half of all translated books worldwide are based on English-language originals. And a recent assessment for France, which covers the year 2005, shows that 58 percent of all translations are from English originals. Traditionally, German and French originals account for an additional one quarter of the total. Yet only 3 percent of all translations, conversely, are from other languages into English.

…When it comes to book publishing, in short, the transfer of cultural knowledge consists of a network of one-way streets, detours, and barred routes.

…The central problem in this context is not the purported Americanization of knowledge or culture, but instead the vertical cascade of knowledge flows and cultural exports, characterized by a clear power hierarchy dominated by larger units in relation to smaller subordinated ones, as well as a scarcity of lateral connections.

Turning his attention to the digital landscape, Wischenbart sees the potential for “new forms of knowledge power,” but quickly sobers us up with a look at the way decentralized networks often still tend toward consolidation:

Previously, of course, large numbers of books have been accessible in large libraries, with older books imposing their contexts on each new release. The network of contents encompassing book knowledge is as old as the book itself. But direct access to the enormous and constantly growing abundance of information and contents via the new information and communication technologies shapes new knowledge landscapes and even allows new forms of knowledge power to emerge.

Theorists of networks like Albert-Laszlo Barabasi have demonstrated impressively how nodes of information do not form a balanced, level field. The more strongly they are linked, the more they tend to constitute just a few outstandingly prominent nodes where a substantial portion of the total information flow is bundled together. The result is the radical antithesis of visions of an egalitarian cyberspace.

He then trains his sights on the “long tail,” that egalitarian business meme propagated by Chris Anderson’s new book, which posits that the new information economy will be as kind, if not kinder, to small niche markets as to big blockbusters. Wischenbart is not so sure:

…there exists a massive problem in both the structure and economics of cultural linkage and transfer, in the cultural networks existing beyond the powerful nodes, beyond the high peaks of the bestseller lists. To be sure, the diversity found below the elongated, flattened curve does constitute, in the aggregate, approximately one half of the total market. But despite this, individual authors, niche publishing houses, translators and intermediaries are barely compensated for their services. Of course, these multifarious works are produced, and they are sought out and consumed by their respective publics. But the “long tail” fails to gain a foothold in the economy of cultural markets, only to become – as in the 18th century – the province of the amateur. Such is the danger when our attention is drawn exclusively to dominant productions, and away from the less surveyable domains of cultural and knowledge associations.

John Cassidy states it more tidily in the latest New Yorker:

There’s another blind spot in Anderson’s analysis. The long tail has meant that online commerce is being dominated by just a few businesses — mega-sites that can house those long tails. Even as Anderson speaks of plentitude and proliferation, you’ll notice that he keeps returning for his examples to a handful of sites — iTunes, eBay, Amazon, Netflix, MySpace. The successful long-tail aggregators can pretty much be counted on the fingers of one hand.

Many have lamented the shift in publishing toward mega-conglomerates, homogenization and an unfortunate infatuation with blockbusters. Many among the lamenters look to the Internet, and hopeful paradigms like the long tail, to shake things back into diversity. But are the publishing conglomerates of the 20th century simply being replaced by the new Internet hyper-nodes of the 21st? Does Google open up more “lateral connections” than Bertelsmann, or does it simply re-aggregate and propagate the existing inequities? Wischenbart suspects the latter, and cautions those like Jeanneney who would seek to compete in the same mode:

If, when breaking into the digital knowledge society, European initiatives (for instance regarding the digitalization of books) develop positions designed to counteract the hegemonic status of a small number of monopolistic protagonists, then it cannot possibly suffice to set a corresponding European pendant alongside existing “hyper nodes” such as Amazon and Google. We have seen this already quite clearly with reference to the publishing market: the fact that so many globally leading houses are solidly based in Europe does nothing to correct the prevailing disequilibrium between cultures.

google and the myth of universal knowledge: a view from europe

I just came across the pre-pub materials for a book, due out this November from the University of Chicago Press, by Jean-Noël Jeanneney, president of the Bibliothèque Nationale de France and famous critic of the Google Library Project. You’ll remember that within months of Google’s announcement of partnership with a high-powered library quintet (Oxford, Harvard, Michigan, Stanford and the New York Public), Jeanneney issued a battle cry across Europe, warning that Google, far from creating a universal world library, would end up cementing Anglo-American cultural hegemony across the internet, eroding European cultural heritages through the insidious linguistic uniformity of its database. The alarm woke Jacques Chirac, who, in turn, lit a fire under all the nations of the EU, leading them to draw up plans for a European Digital Library. A digitization space race had begun between the private enterprises of the US and the public bureaucracies of Europe.

Now Jeanneney has funneled his concerns into a 96-page treatise called Google and the Myth of Universal Knowledge: a View from Europe. The original French version is pictured above. From U. Chicago:

Jeanneney argues that Google’s unsystematic digitization of books from a few partner libraries and its reliance on works written mostly in English constitute acts of selection that can only extend the dominance of American culture abroad. This danger is made evident by a Google book search the author discusses here–one run on Hugo, Cervantes, Dante, and Goethe that resulted in just one non-English edition, and a German translation of Hugo at that. An archive that can so easily slight the masters of European literature–and whose development is driven by commercial interests–cannot provide the foundation for a universal library.

Now I’m no big lover of Google, but there are a few problems with this critique, at least as summarized by the publisher. First of all, Google is just barely into its scanning efforts, so naturally, search results will often come up threadbare or poorly proportioned. But there’s more that complicates Jeanneney’s charges of cultural imperialism. Last October, when the copyright debate over Google’s ambitions was heating up, I received an informative comment on one of my posts from a reader at the Online Computer Library Center. They had recently completed a profile of the collections of the five Google partner libraries, and had found, among other things, that just under half of the books that could make their way into Google’s database are in English:

More than 430 languages were identified in the Google 5 combined collection. English-language materials represent slightly less than half of the books in this collection; German-, French-, and Spanish-language materials account for about a quarter of the remaining books, with the rest scattered over a wide variety of languages. At first sight this seems a strange result: the distribution between English and non-English books would be more weighted to the former in any one of the library collections. However, as the collections are brought together there is greater redundancy among the English books.

Still, the “driven by commercial interests” part of Jeanneney’s attack is important and on-target. I worry less about the dominance of any single language (I assume Google wants to get its scanners on all books in all tongues), and more about the distorting power of the market on the rankings and accessibility of future collections, not to mention the effect on the privacy of users, whose search profiles become company assets. France tends much further toward the enlightenment end of the cultural policy scale: witness what they (almost) achieved with their anti-DRM iTunes interoperability legislation. Can you imagine James Billington, of our own Library of Congress, asserting such leadership on the future of digital collections? LOC’s feeble World Digital Library effort, which even receives private investment from Google, is a mere afterthought to what Google and its commercial rivals are doing. Most public debate in this country is also of the afterthought variety. The privatization of public knowledge plows ahead, and yet few complain. Good for Jeanneney and the French for piping up.